Developing agents and multi-agent systems often seems to be about a lone-wolf developer standing up a whole fleet of agents (cue your favourite OpenClaw story).

But what if, as an organisation, you want a multi-agent system built by developers working across different teams?

In that scenario, the agents you need in your multi-agent system (MAS) are not going to show up all at once in perfect sync. For a (hopefully) short time the system will appear fragmented, before the agents start coming online and give it some shape.

This post is about how you can stub out agents to ensure teams are decoupled.

This post is divided into two sections. The first one describes what a stub can look like and the other how these stubs fit based on specific orchestration scenarios.

Code and results can be found here.

Types of Stubs

Dumb Stub

This type of stub is just a bridge over the gap. The primary benefit is to ensure you can test any kind of routing and orchestration to this agent (not from it). This also allows you to see the shape of your multi-agent system and ensure you have placeholders to map on-paper architecture to code.

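    class StubAgent(BaseAgent):
        def __init__(self, name: str, description: str):
            super().__init__(name=name, description=description)

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            # Yield a single empty event so that this method is an async generator;
            # with only `pass` it would be a plain coroutine.
            yield Event(author=self.name, turn_complete=True)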

Usage: The name and description are the minimum information you need to agree with the team responsible for building the agent. This must be done as part of the MAS design and agent-to-skill mapping.

Deterministic Stub

A deterministic stub fits when we know the major scenarios we want to stub and can express them as a rule-based decision tree, for example when the agents are part of a sequence, taking structured input and producing structured output. In production, such rule-based stubs are replaced by a more flexible and robust understanding and decisioning system (e.g., an LLM or ML model).

Usage: First decide which scenarios you want the stub to cover, based on the requirements for the agent. Decide whether you want to test the happy path, the unhappy path, or both. The problem to solve is then to create simple rules that map inputs to specific outputs. This requires coordination with the team developing the actual agent, around the goals/tasks assigned to the agent. The stub can also be used to test session state updates (plumbing for the system).
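To make the "simple rules" idea concrete, here is a minimal sketch of a rule table that maps structured input to canned output. It is plain Python with no ADK dependency. The `Rule` type, the scenario names and the input fields (`customer_id`, `amount`) are all invented for illustration; the real ones would come out of the conversation with the team building the actual agent.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One branch of the stub's decision tree (hypothetical helper type)."""
    name: str
    matches: Callable[[dict], bool]  # predicate over the structured input
    output: dict                     # canned structured output for the scenario


# Hypothetical scenarios: two unhappy paths, then a catch-all happy path.
RULES = [
    Rule("missing_customer_id", lambda msg: not msg.get("customer_id"),
         {"status": "rejected", "reason": "customer_id is required"}),
    Rule("over_limit", lambda msg: msg.get("amount", 0) > 1000,
         {"status": "referred", "reason": "amount exceeds stub limit"}),
    Rule("happy_path", lambda msg: True,
         {"status": "approved", "reason": None}),
]


def stub_decide(message: dict) -> dict:
    """Return the canned output of the first rule that matches the input."""
    for rule in RULES:
        if rule.matches(message):
            return {"scenario": rule.name, **rule.output}
    # Only reachable if the catch-all rule is removed; kept as a guard.
    raise ValueError("no rule matched")
```

Because rules are checked in order, the unhappy paths sit first and the happy path acts as the default. Inside an ADK stub, something like `stub_decide` would be called from `_run_async_impl` and its result wrapped in an event.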

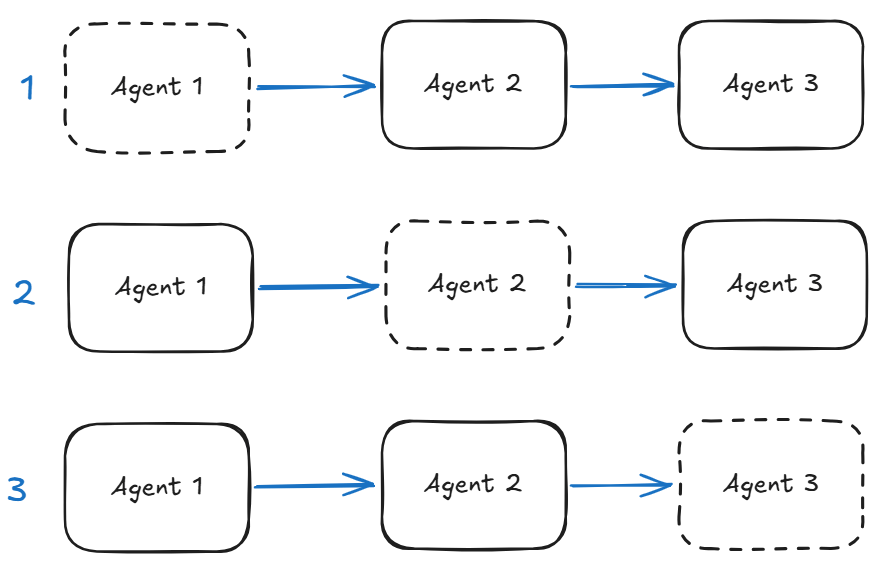

As the figure shows, if an agent we need to stub is missing from a Sequential workflow, there are three scenarios:

- Agent missing at the start of the flow – the stub will need to create output that drives the rest of the flow. This can be done based on specific flow scenarios (e.g., customer details passed in a structured format). This is a critical stub as it can either protect the downstream agents or push them off track.

  - State: the stub can be used to initialise session state.

- Agent missing in the middle of the flow – the stub will need to deal with input from the upstream agent as well as produce output to continue the scenario. We need to localise the behaviour of the stubbed agent.

  - State: the stub can be used to propagate state changes downstream aligning with the specific use-case.

- Agent missing at the end of the flow – this stub needs to capture the end state of the flow for whatever is waiting at the other end. We have to be careful as these types of stubs can misrepresent the entire flow.

  - State: the stub can be used to finalise state change at the end of the flow.
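The three positions can be sketched without any framework. In the snippet below a plain dict stands in for session state and each stub is just a function. This is not ADK's sequential workflow, only an illustration of what each stub is responsible for; the function names, state keys and values are hypothetical.

```python
from typing import Callable

State = dict  # stand-in for the session state shared along the flow


def start_stub(state: State) -> State:
    """Missing first agent: initialise state with a known scenario."""
    return {**state, "customer": {"id": "c-1", "tier": "gold"}, "hops": 1}


def middle_stub(state: State) -> State:
    """Missing middle agent: read upstream state, propagate a change downstream."""
    discount = 0.2 if state["customer"]["tier"] == "gold" else 0.0
    return {**state, "discount": discount, "hops": state["hops"] + 1}


def end_stub(state: State) -> State:
    """Missing last agent: finalise state for whatever consumes the flow."""
    return {**state, "status": "complete", "hops": state["hops"] + 1}


def run_sequence(steps: list[Callable[[State], State]], state: State) -> State:
    """Run each step in order, feeding it the state left by the previous one."""
    for step in steps:
        state = step(state)
    return state


final_state = run_sequence([start_stub, middle_stub, end_stub], {})
```

In a real system only one of the three would normally be a stub, with real agents in the other positions. The point is that the start stub decides which scenario everything downstream sees, which is why it deserves the most care.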

A basic variant of the Deterministic Stub is shown below, with all the different ‘action’ options:

- Just generate some content based on a rule (append ‘Hello world’).

- Update some state variable (hop count).

- Indicate a transfer to another agent.

    class DeterministicStubAgent(BaseAgent):
        integration: str = "Stub_Agent_Integration"

        def __init__(self, name: str, description: str, sub_agents: Optional[list] = None):
            # Avoid a mutable default argument; pass sub-agents through to the base class.
            super().__init__(name=name, description=description, sub_agents=sub_agents or [])

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            # Get the input message from the context
            logger.info(f"{self.name} received context: {ctx}")
            input_message = (
                ctx.user_content.parts[0].text
                if ctx.user_content and ctx.user_content.parts
                else ""
            )
            print(f"Received input message: {input_message}")

            # Add "Hello world" to the message
            # Put your custom code here...
            modified_message = f"Hello world {input_message}"
            print(f"Modified message: {modified_message}")

            hop_count = ctx.session.state.get("hop_count", 0)
            state_delta = {"hop_count": hop_count + 1}
            invocation_id = f"{hop_count}_{random.randint(1000, 9999)}"

            if 2 <= hop_count < 5:
                # Transfer to another, cheaper agent after 2 hops
                action = EventActions(state_delta=state_delta, transfer_to_agent=self.integration)
                event = Event(
                    invocation_id=invocation_id,
                    author=self.name,
                    content=Content(
                        role="assistant",
                        parts=[Part(function_call={"name": "transfer_to_agent", "args": {"agent_name": self.integration}})],
                    ),
                    actions=action,
                )
            elif hop_count >= 5:
                # Complete the turn for this agent.
                print("Turn completed")
                action = EventActions(state_delta=state_delta)
                # turn_complete belongs on Event (as in build_event), not on EventActions.
                event = Event(
                    invocation_id=invocation_id,
                    author=self.name,
                    content=Content(role="assistant", parts=[Part(text=modified_message)]),
                    actions=action,
                    turn_complete=True,
                )
            else:
                # Update state change only.
                action = EventActions(state_delta=state_delta)
                event = Event(
                    author=self.name,
                    content=Content(role="assistant", parts=[Part(text=modified_message)]),
                    actions=action,
                )

            # End of custom code: Remember to yield an event!
            yield event

Intelligent Stub

Use this when you find it difficult to write code that maps inputs to outputs but still need to stub out the comprehension behaviour of the agent.

Usage: Ensure you focus on the inputs and outputs while stubbing out the comprehension aspect of the real agent. Be careful not to add decisioning behaviours to the stub; otherwise you will create a system that is tuned to the stub’s behaviour and may behave differently once the stub is replaced with the real agent.

You can relate the input to the output using one of the methods below:

- some sort of semantic search (e.g., vector search), where an ML model is used to understand the input and the index provides a semantic mapping to the test output to use.

- prompt a light-weight LLM to understand the input and map to one of the pre-set outputs without exercising its own decisioning capabilities.
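The second approach could look roughly like the sketch below. It is not tied to any particular LLM client: `complete` is a placeholder for any text-in/text-out call, `fake_llm` is a stand-in so the sketch runs without a model, and the `Unknown` fallback is an assumption. The important part is that the reply is checked against the pre-set labels, so the model's own decisioning never leaks into the stub.

```python
from typing import Callable

KEY_INTENTS = ["Integration", "Differentiation", "Algebra", "Geometry", "Trigonometry"]
FALLBACK_INTENT = "Unknown"  # assumed label for replies outside the pre-set list


def build_prompt(user_input: str) -> str:
    """Constrain the model to pick a label rather than answer the question."""
    labels = ", ".join(KEY_INTENTS)
    return (
        f"Classify the request into exactly one of these labels: {labels}. "
        f"Reply with the label only.\n\nRequest: {user_input}"
    )


def classify_intent(user_input: str, complete: Callable[[str], str]) -> str:
    """Ask the (placeholder) LLM for a label and reject anything off-list."""
    reply = complete(build_prompt(user_input)).strip()
    return reply if reply in KEY_INTENTS else FALLBACK_INTENT


def fake_llm(prompt: str) -> str:
    """Stand-in for a light-weight LLM; looks only at the request text."""
    request = prompt.rsplit("Request: ", 1)[-1]
    return " Integration\n" if "integral" in request.lower() else "Let me solve that for you"
```

Calling `classify_intent("Find the integral of x^2", fake_llm)` goes through the same path a real model would: prompt out, label back, label validated.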

Example

I show an example of how such an ‘intelligent’ stub can be developed using the first approach (semantic search). For this we have used a set of ‘key intents’ aligned with the use-case, an embedding model to simulate the comprehension of the agent and a simple similarity score to surface the intent based on the input.

    class IntelligentStubAgent(BaseAgent):
        model: SentenceTransformer = SentenceTransformer('all-MiniLM-L6-v2')
        key_intents: list[str] = ["Integration", "Differentiation", "Algebra", "Geometry", "Trigonometry"]
        encoded_intents: list[np.ndarray] = []

        def __init__(self, name: str, description: str, sub_agents: Optional[list] = None):
            # Avoid a mutable default argument; pass sub-agents through to the base class.
            super().__init__(name=name, description=description, sub_agents=sub_agents or [])
            self.encoded_intents = [self.model.encode(intent) for intent in self.key_intents]

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            # Get the input message from the context
            logger.info(f"{self.name} received context: {ctx}")
            input_message = (
                ctx.user_content.parts[0].text
                if ctx.user_content and ctx.user_content.parts
                else ""
            )
            print(f"Received input message: {input_message}")

            # Encode the incoming message for similarity search
            # (this simulates the comprehension step of the real agent)
            encoded_input = self.model.encode(input_message)

            # Cosine distance to each intent; the smallest distance is the best match
            distances = [1 - float(util.cos_sim(encoded_input, intent)) for intent in self.encoded_intents]
            best_intent_index = int(np.argmin(distances))
            best_intent = self.key_intents[best_intent_index]

            modified_message = f"Identified intent: {best_intent} for input: {input_message}"
            event = build_event(
                name=self.name,
                content=f"Detected intent: {modified_message}",
                state_delta={"identified_intent": best_intent},
            )
            yield event


    # Nothing to see here - helper method to build an event object.
    def build_event(name: str, content: str, turn_complete: bool = False,
                    transfer_to_agent: Optional[str] = None,
                    state_delta: Optional[dict] = None) -> Event:
        action = EventActions(state_delta=state_delta or {}, transfer_to_agent=transfer_to_agent)
        invocation_id = f"{name}-{random.randint(1, 99999)}"
        event = Event(
            invocation_id=invocation_id,
            author=name,
            content=Content(role="assistant", parts=[Part(text=content)]),
            actions=action,
            turn_complete=turn_complete,
        )
        return event

Conclusions

The stubs shown in this post can be used as sub-agents or within the deterministic workflows supported by ADK (looping, sequential, parallel). In the next post I will attempt to build out the deterministic workflow examples.

Below is the full example for sub-agents.

    import logging
    import random
    from typing import AsyncGenerator, Optional

    import numpy as np
    from google.adk.agents import BaseAgent, InvocationContext
    from google.adk.agents.llm_agent import LlmAgent
    from google.adk.events import Event, EventActions
    from google.adk.models.lite_llm import LiteLlm
    from google.genai.types import Content, Part
    from sentence_transformers import SentenceTransformer, util
    from typing_extensions import override

    logging.basicConfig(level=logging.INFO)
    logger = logging.getLogger(__name__)

    MODEL = LiteLlm(model="ollama_chat/qwen3.5:2b")


    class StubAgent(BaseAgent):
        def __init__(self, name: str, description: str, sub_agents: Optional[list] = None):
            # Avoid a mutable default argument for sub_agents.
            super().__init__(name=name, description=description, sub_agents=sub_agents or [])

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            print("Activating StubAgent with context:", ctx)
            logger.info(f"{self.name} received context: {ctx}")
            yield Event(turn_complete=True, author=self.name)


    class DeterministicStubAgent(BaseAgent):
        integration: str = "Stub_Agent_Integration"

        def __init__(self, name: str, description: str, sub_agents: Optional[list] = None):
            super().__init__(name=name, description=description, sub_agents=sub_agents or [])

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            # Get the input message from the context
            logger.info(f"{self.name} received context: {ctx}")
            input_message = (
                ctx.user_content.parts[0].text
                if ctx.user_content and ctx.user_content.parts
                else ""
            )
            print(f"Received input message: {input_message}")

            # Add "Hello world" to the message
            modified_message = f"Hello world {input_message}"
            print(f"Modified message: {modified_message}")

            hop_count = ctx.session.state.get("hop_count", 0)
            state_delta = {"hop_count": hop_count + 1}
            invocation_id = f"{hop_count}_{random.randint(1000, 9999)}"

            if 2 <= hop_count < 5:
                # Transfer to another, cheaper agent after 2 hops
                action = EventActions(state_delta=state_delta, transfer_to_agent=self.integration)
                event = Event(
                    invocation_id=invocation_id,
                    author=self.name,
                    content=Content(
                        role="assistant",
                        parts=[Part(function_call={"name": "transfer_to_agent", "args": {"agent_name": self.integration}})],
                    ),
                    actions=action,
                )
            elif hop_count >= 5:
                print("Turn completed")
                action = EventActions(state_delta=state_delta)
                # turn_complete belongs on Event (as in build_event), not on EventActions.
                event = Event(
                    invocation_id=invocation_id,
                    author=self.name,
                    content=Content(role="assistant", parts=[Part(text=modified_message)]),
                    actions=action,
                    turn_complete=True,
                )
            else:
                # Update state change only.
                action = EventActions(state_delta=state_delta)
                event = Event(
                    author=self.name,
                    content=Content(role="assistant", parts=[Part(text=modified_message)]),
                    actions=action,
                )

            yield event


    class IntelligentStubAgent(BaseAgent):
        model: SentenceTransformer = SentenceTransformer('all-MiniLM-L6-v2')
        key_intents: list[str] = ["Integration", "Differentiation", "Algebra", "Geometry", "Trigonometry"]
        encoded_intents: list[np.ndarray] = []

        def __init__(self, name: str, description: str, sub_agents: Optional[list] = None):
            super().__init__(name=name, description=description, sub_agents=sub_agents or [])
            self.encoded_intents = [self.model.encode(intent) for intent in self.key_intents]

        @override
        async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
            # Get the input message from the context
            logger.info(f"{self.name} received context: {ctx}")
            input_message = (
                ctx.user_content.parts[0].text
                if ctx.user_content and ctx.user_content.parts
                else ""
            )
            print(f"Received input message: {input_message}")

            # Encode the incoming message for similarity search
            encoded_input = self.model.encode(input_message)

            # Cosine distance to each intent; the smallest distance is the best match
            distances = [1 - float(util.cos_sim(encoded_input, intent)) for intent in self.encoded_intents]
            best_intent_index = int(np.argmin(distances))
            best_intent = self.key_intents[best_intent_index]

            modified_message = f"Identified intent: {best_intent} for input: {input_message}"
            event = build_event(
                name=self.name,
                content=f"Detected intent: {modified_message}",
                state_delta={"identified_intent": best_intent},
            )
            yield event


    def build_event(name: str, content: str, turn_complete: bool = False,
                    transfer_to_agent: Optional[str] = None,
                    state_delta: Optional[dict] = None) -> Event:
        action = EventActions(state_delta=state_delta or {}, transfer_to_agent=transfer_to_agent)
        invocation_id = f"{name}-{random.randint(1, 99999)}"
        event = Event(
            invocation_id=invocation_id,
            author=name,
            content=Content(role="assistant", parts=[Part(text=content)]),
            actions=action,
            turn_complete=turn_complete,
        )
        return event


    instruction = """
    You are an autonomous agent that takes a complex maths problem and breaks it down into smaller steps to solve it.
    You have access to a set of agents for each branch of maths.
    """

    stub_agent_1 = StubAgent(name="Stub_Agent_Integration", description="Agent that can do Integration problems")
    stub_agent_2 = DeterministicStubAgent(name="Stub_Agent_Differentiation", description="Agent that can do Differentiation problems")
    stub_agent_3 = IntelligentStubAgent(name="Stub_Agent_Intelligent", description="Agent that can identify the branch of maths")

    root_agent = LlmAgent(
        name="Root_Agent",
        description="Root agent for handling conversation and classification of problem",
        instruction=instruction,
        model=MODEL,
        sub_agents=[stub_agent_1, stub_agent_2, stub_agent_3],
    )

Output:

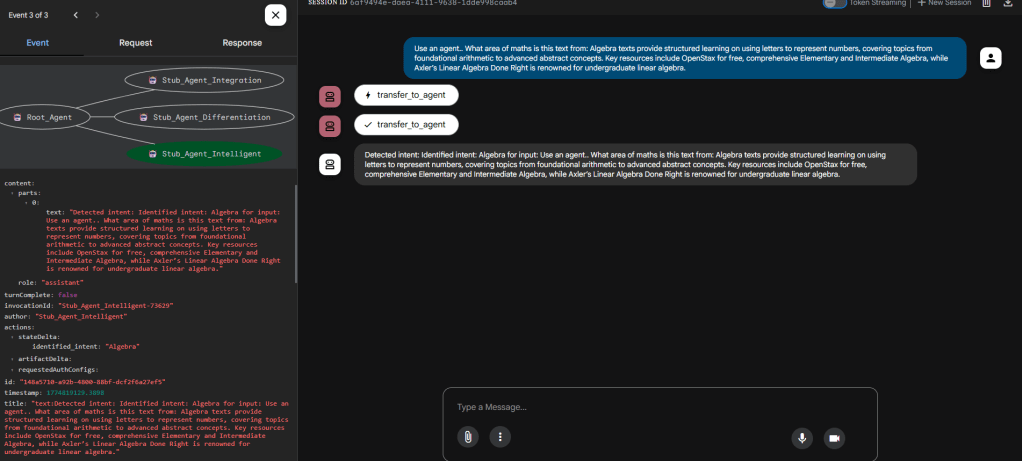

Intelligent Stub in action

In the above, the intent has been correctly identified and can now be used to pull a specific response from a test list. ADK web tracing shows that the Intelligent stub was called.
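Pulling a response from a test list could be as simple as the lookup sketched here. It assumes the `identified_intent` state key written by the Intelligent stub; the contents of `TEST_RESPONSES` and the fallback text are invented for illustration.

```python
# Hypothetical canned responses, keyed by intents the stub can identify.
TEST_RESPONSES = {
    "Integration": "Canned integration walkthrough.",
    "Differentiation": "Canned differentiation walkthrough.",
}
DEFAULT_RESPONSE = "No canned response for this intent yet."


def response_for(state: dict) -> str:
    """Look up the canned response for the intent stored in session state."""
    intent = state.get("identified_intent")
    return TEST_RESPONSES.get(intent, DEFAULT_RESPONSE)
```

Keeping the fallback explicit makes it obvious in test output when an intent has been identified but no test response has been agreed for it yet.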

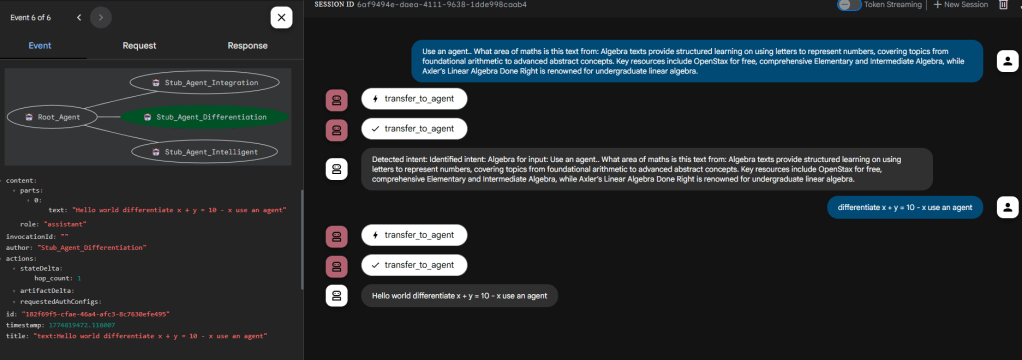

Deterministic Stub in action

In the above, the flow has been directed to the deterministic agent, which has prepended ‘Hello world’ to the output correctly. This was confirmed using the ADK web tracing.

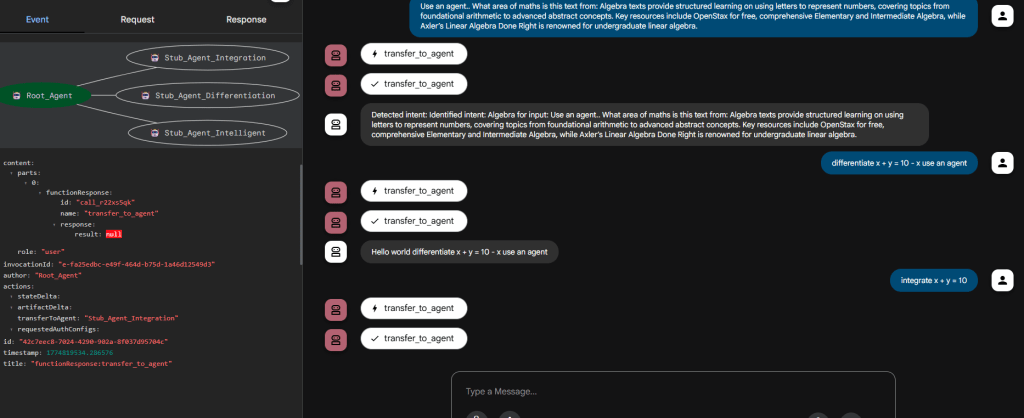

Dumb Stub (not) in action

In the above we don’t see anything interesting (given this is a Dumb stub) except that the Root Agent correctly routed to it based on the description provided to the dumb stub. This can be extremely useful when you have a large set of sub-agents and not all of them are available to test the routing result of your root agent instructions.